The Anatomy of Crashes in NYC

We’re only human, right!?

It’s a fact that 95% of car collisions are caused by human error. The autonomous vehicle has been heralded as the future road messiah that will take the wheel and save us from self-inflicted pain and loss. However, it’s important to remember that it will take roughly half a century to replace the 1.2 billion vehicles on our roads today with the driving pods of tomorrow. So the question remains: how do we better understand and prevent car crashes in our lifetime? Can we cut the 1.2 million road casualties that occur worldwide each year by 50% in 5 years, and if so, how? Call me a dreamer, but I think it can be done by leveraging technology and thinking differently about road safety. I believe this because I see this potential every day at Nexar.

Nexar is the connected-driving technology company on a mission to rid the world of car collisions and road casualties. Our journey began by building an artificial intelligence (AI) dashcam app that works on any smartphone. We started by introducing the app to individual drivers and then to networks of Uber and Lyft drivers, until our user base grew to hundreds of thousands of drivers across metropolitan areas such as New York City and San Francisco. All of these dashcams are connected in one unified vehicle-to-vehicle (V2V) network that provides collision-avoidance alerts to protect drivers, cyclists, and pedestrians on the road. To provide these services, the network collects a great deal of video and telemetry driving data: over 50 million miles of recorded driving video to date.

Having gathered mountains of this data, we decided to analyze it and see whether we could find any useful insights about the anatomy of car crashes. I sat down one afternoon with Dr. Lev Reitblat, Nexar’s lead data scientist. Together we looked at collisions that took place in the NYC area over the past 12 months. Here’s what we discovered.

Crashes Under the Magnifying Glass

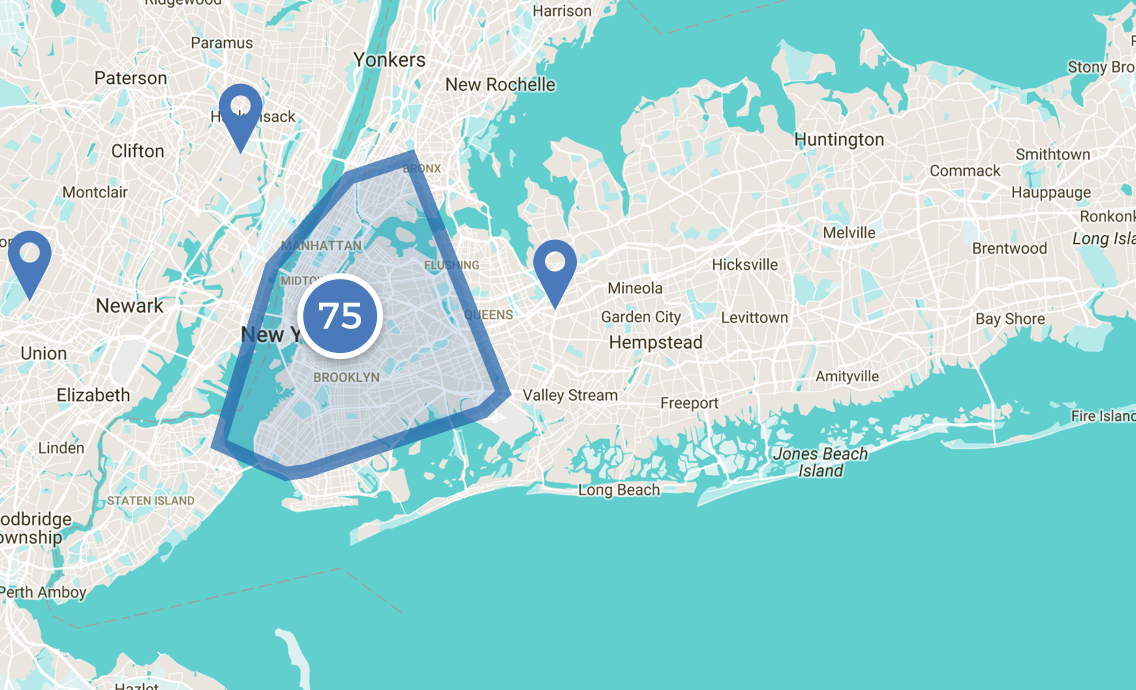

Lev and I looked at about 13 million miles of driving over the last 12 months, focusing on professional drivers: those in rideshare, taxi, and fleet driving. This dataset included about 1 million hours of driving and contained 87 unfortunate crashes that were automatically detected by dashcams in our network. In other words, the dataset showed an average of roughly 150,000 miles of driving between crashes and an overall average driving speed of 13 mph.
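Both averages follow from simple division of the rounded totals quoted above (13 million miles, 1 million hours, 87 crashes). A minimal Python sketch of that arithmetic; the function names are illustrative only and not part of any Nexar tool:

```python
# Rounded totals quoted in the text.
TOTAL_MILES = 13_000_000
TOTAL_HOURS = 1_000_000
CRASHES = 87


def miles_between_crashes(miles: float, crashes: int) -> float:
    """Average miles driven per detected crash."""
    return miles / crashes


def average_speed_mph(miles: float, hours: float) -> float:
    """Overall average speed: total miles divided by total hours of driving."""
    return miles / hours


print(f"{miles_between_crashes(TOTAL_MILES, CRASHES):,.0f} miles per crash")
print(f"{average_speed_mph(TOTAL_MILES, TOTAL_HOURS):.0f} mph on average")
```

The first figure comes out just under 150,000, which is why the text rounds it to "roughly 150,000 miles." The low 13 mph average is consistent with dense city traffic.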

First, we observed that across New York City’s boroughs, most accidents happened in dense urban areas, with a high concentration in Midtown Manhattan.

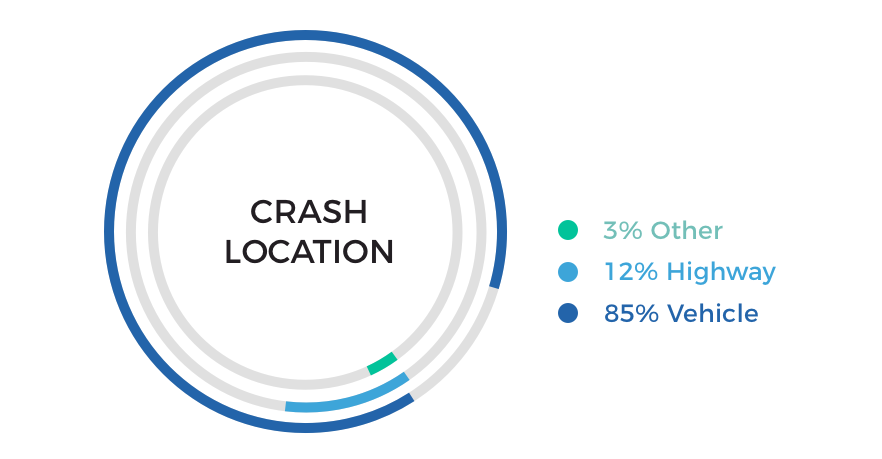

We then looked at which types of roads the crashes occurred on and found the vast majority occurred on urban roads rather than highways.

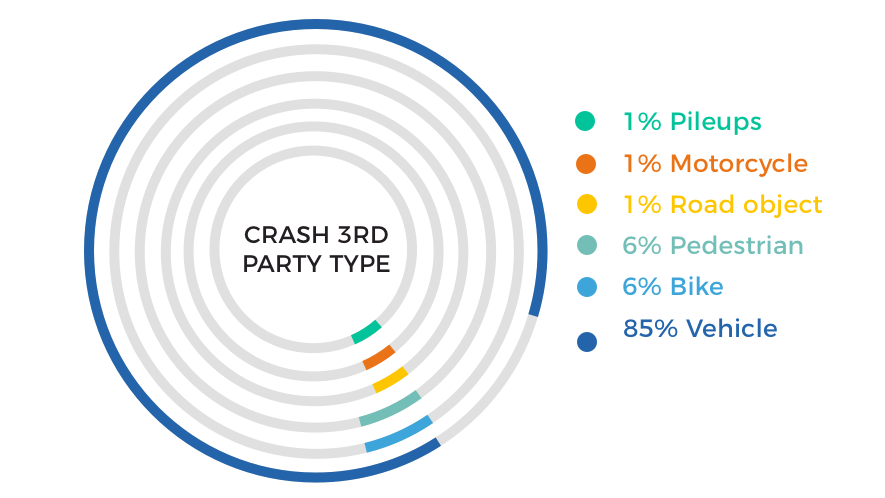

Layering video recordings on top of telemetry data (such as accelerometer data) helped us learn more about each individual crash, including who was involved. Most crashes (85%) involved two vehicles, while collisions involving pedestrians and cyclists accounted for 6% each.

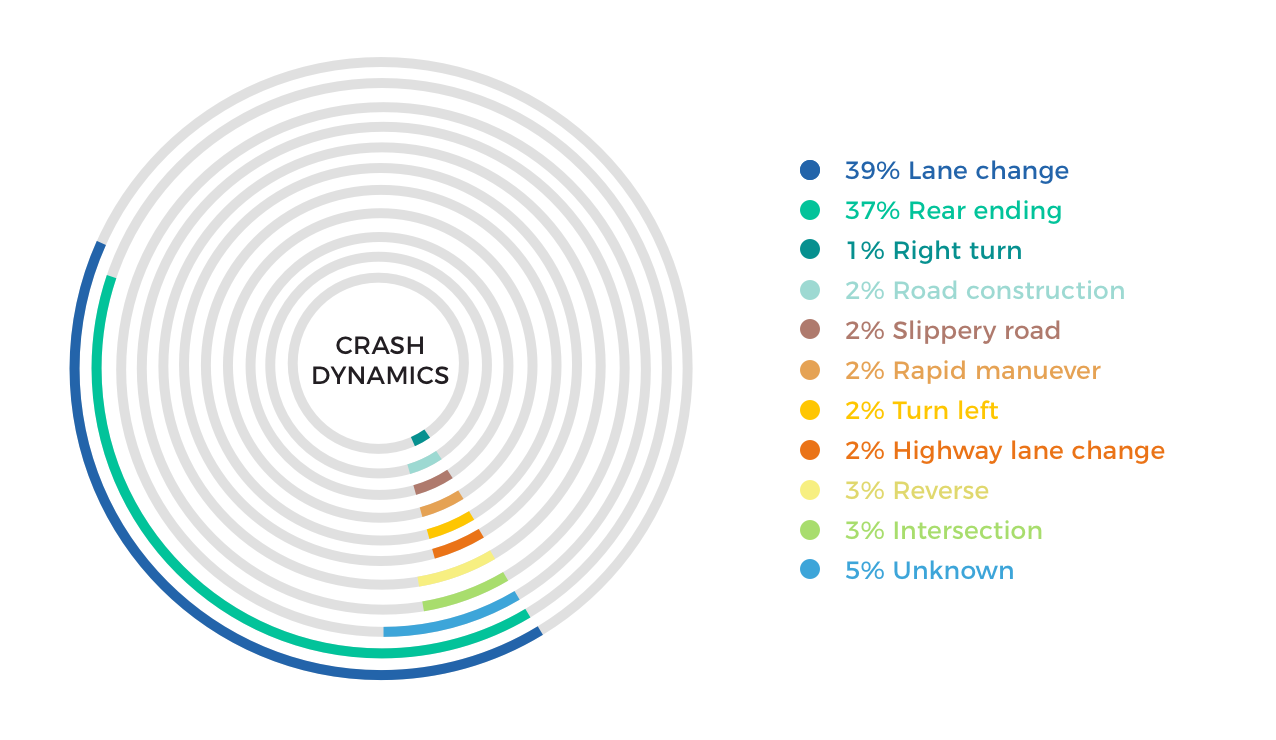

When analyzing the crash videos and motion dynamics, we found that the lion’s share involved lane changes on urban roads, which was a bit of a surprise to me. This could reflect the constant friction of urban traffic in NYC. Qualitatively, we could also tell from the videos that the damage sustained in these crashes was much more severe than in rear-end crashes and required major body work.

Digging into Tougher Questions

Wanting to dive deeper and answer more complex questions about driver profiling and crash predictability, I asked Lev for more insight into each driver’s contribution to the crashes.

To help answer this question, we defined a rule of thumb for crash contribution and applied it across the dataset using machine learning. For the purpose of this study, if a driver was rear-ended or abruptly cut off while moving in a straight line or standing still, he or she did not contribute to the crash. Conversely, if the driver rear-ended someone else or abruptly cut someone else off, he or she fully contributed to the crash. In all other cases, we assumed shared contribution. With those parameters in mind, we looked at two additional driving indicators. On top of the 87 crashes, the dataset contained 400,000 “driving events” for the same cohort, including hard brakes, harsh cornering, rapid accelerations, and “near collisions,” which numbered 28,000.
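The contribution rule above is a three-way decision that can be written as a short function. This is a sketch only, assuming each crash has already been reduced to a handful of yes/no labels (the machine-learning step that would produce them is not shown). The `CrashLabel` fields and the `crash_contribution` name are hypothetical, not Nexar's actual schema:

```python
from dataclasses import dataclass
from enum import Enum


class Contribution(Enum):
    NONE = "none"      # the driver did not contribute
    FULL = "full"      # the driver fully contributed
    SHARED = "shared"  # every other case


@dataclass
class CrashLabel:
    # Hypothetical per-crash labels, assumed to come from an upstream
    # video/telemetry model; these names are illustrative only.
    ego_was_rear_ended: bool = False    # another vehicle hit our driver from behind
    ego_was_cut_off: bool = False       # another vehicle abruptly cut our driver off
    ego_rear_ended_other: bool = False  # our driver hit the vehicle ahead
    ego_cut_off_other: bool = False     # our driver abruptly cut another vehicle off
    ego_steady: bool = False            # our driver was moving straight or standing still


def crash_contribution(label: CrashLabel) -> Contribution:
    """Apply the rule of thumb in the order the text states it."""
    # Rule 1: hit from behind or cut off while steady -> no contribution.
    if (label.ego_was_rear_ended or label.ego_was_cut_off) and label.ego_steady:
        return Contribution.NONE
    # Rule 2: rear-ended or cut off someone else -> full contribution.
    if label.ego_rear_ended_other or label.ego_cut_off_other:
        return Contribution.FULL
    # Everything else (e.g. a sideswipe during a lane change) -> shared.
    return Contribution.SHARED


print(crash_contribution(CrashLabel(ego_was_rear_ended=True, ego_steady=True)))
```

Checking the rules in a fixed order keeps the outcome well defined when a crash matches more than one description; a real pipeline might prefer to flag such cases for review instead.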

The primary finding was that crashes with “contributing drivers” were highly correlated with past “near misses” and rough driving styles. We found that drivers who contributed to the crashes had:

- 26% more emergency braking events than the group’s average

- 55% more near-collision incidents in total than the group’s average

- 90% more near-collision incidents to which they contributed than the group’s average
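Each figure in the list is a relative excess over the group average. A minimal sketch of that calculation, assuming event counts are first normalized by miles driven (the text does not say how rates were normalized, so that step is an assumption). The numbers in the usage lines are made up purely to show the arithmetic:

```python
def rate_per_1000_miles(events: int, miles: float) -> float:
    """Normalize an event count by exposure (assumed normalization)."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return events / miles * 1000.0


def pct_above_average(driver_rate: float, group_rate: float) -> float:
    """How much higher a rate is than the group average, in percent."""
    if group_rate <= 0:
        raise ValueError("group_rate must be positive")
    return (driver_rate / group_rate - 1.0) * 100.0


# Hypothetical numbers, for illustration only.
group_avg = rate_per_1000_miles(events=20, miles=10_000)     # 2 hard brakes per 1,000 miles
contributor = rate_per_1000_miles(events=126, miles=50_000)  # 2.52 per 1,000 miles
print(f"{pct_above_average(contributor, group_avg):.0f}% more than average")
```

Normalizing by distance keeps drivers who simply drive more from looking riskier; normalizing by hours would be an equally reasonable choice.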

This is a useful insight that, at the very least, indicates the potential of vision telematics. Longitudinal vision-based AI, which observes a driver over many trips, is an emerging and innovative way to profile driving-behavior risk. It could add context to driving events, provide an incentive to re-educate and test drivers, and better predict driver risk for insurance applications and coverage.

Vision Telematics: Creating The New Driver Scorecard

The auto insurance telematics industry has long depended on dongles plugged into the vehicle’s on-board diagnostics (OBD) port and other onboard devices that collect location and motion telemetry at a rate of about one observation per second. Vision telematics adds forward-facing driving video on top of that, opening up a new dimension of telematics.

Imagine you’re sitting in the passenger seat with your eyes closed, assessing the driver next to you. Now imagine doing the same thing with your eyes open. Can we agree that being able to see would let you judge the driver’s skill much better? The same holds true when you add vision to telematics.

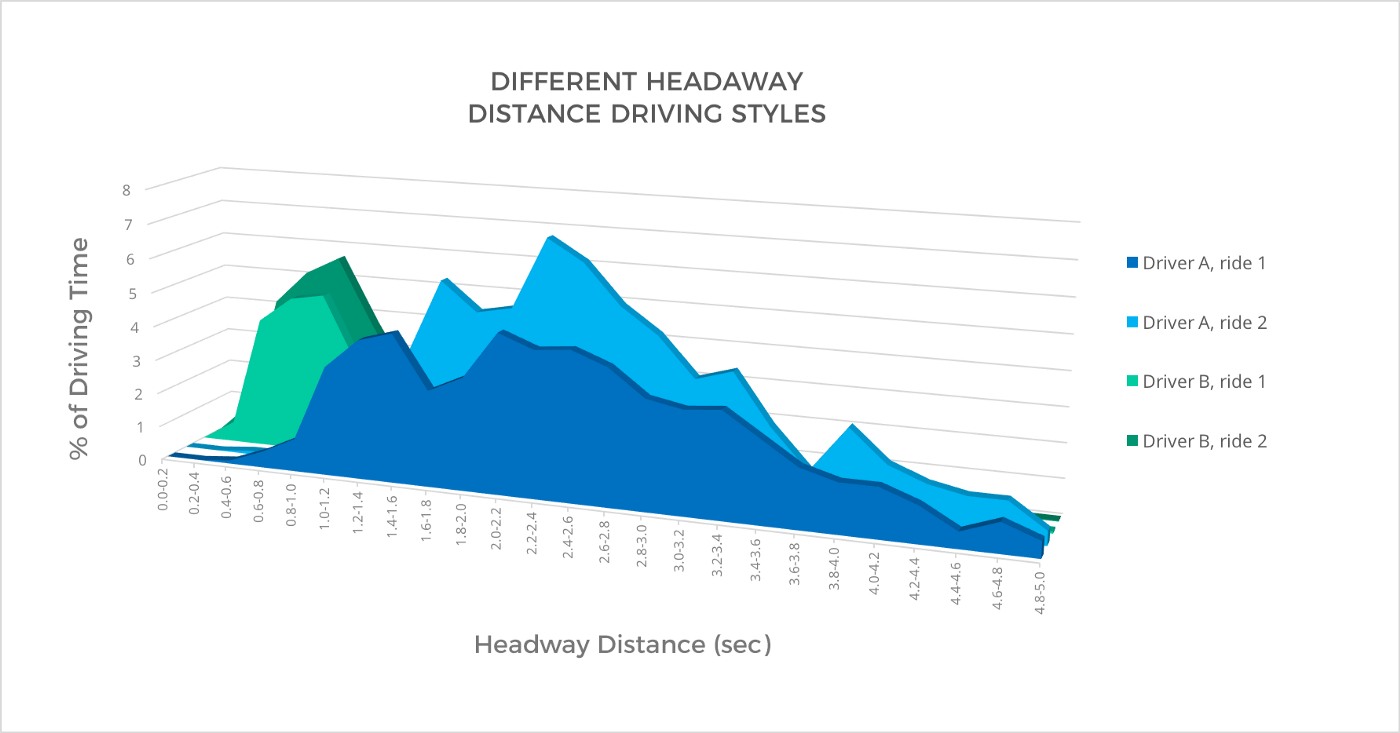

One way this new technology could be applied is in measuring headway, the distance a driver keeps from the car in front. When I was a young driver, my father used to reprimand me for not keeping my distance from the car ahead. With vision telematics, headway becomes a known, highly visible metric of driving behavior for both the driver and the insurance company. I asked Lev for a point-in-time sample of two randomly chosen drivers commuting to work over similar routes, showing how each kept their distance during one drive. We can see in the graph below that the percentage of time each driver spends within a given headway of the car in front is very different. Note that headway here is the distance to the car in front divided by speed, so it is expressed in seconds.
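Converting distance to seconds and bucketing it is what turns raw gaps into the "percentage of time" profile described above. A minimal sketch, assuming per-sample gap estimates (in meters) and own speed (in m/s) are already available from the camera and telemetry, and that samples are evenly spaced in time so a share of samples equals a share of time. The function names and bin edges are illustrative choices, not the ones used for our graph:

```python
import math
from typing import List, Optional, Sequence


def time_headway_s(gap_m: float, speed_mps: float) -> Optional[float]:
    """Headway in seconds: distance to the car in front divided by own speed."""
    if speed_mps <= 0:
        return None  # undefined while stopped, so such samples are skipped
    return gap_m / speed_mps


def headway_distribution(gaps_m: Sequence[float], speeds_mps: Sequence[float],
                         edges_s: Sequence[float]) -> List[float]:
    """Percent of moving samples whose headway falls in each [edges[i], edges[i+1]) bin.

    Headways outside the outer edges are counted in the total but not binned.
    """
    headways = []
    for gap, speed in zip(gaps_m, speeds_mps):
        h = time_headway_s(gap, speed)
        if h is not None:
            headways.append(h)
    counts = [0] * (len(edges_s) - 1)
    for h in headways:
        for i in range(len(counts)):
            if edges_s[i] <= h < edges_s[i + 1]:
                counts[i] += 1
                break
    if not headways:
        return [0.0] * len(counts)
    return [100.0 * c / len(headways) for c in counts]


# Hypothetical samples: gap to the car ahead (m) and own speed (m/s).
bins = [0.0, 1.0, 2.0, 3.0, math.inf]
print(headway_distribution([10, 20, 30, 5], [10, 10, 10, 0], bins))
```

Dividing by speed is what makes drives comparable: a 10 m gap is generous in crawling traffic but dangerously short at highway speed.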

It is common knowledge that not keeping a safe braking distance from the car in front of you is a good predictor of crash probability, because it reduces the time you have to react to the cars ahead. With vision telematics, the distance you keep can, for the first time, be measured accurately.
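A simplified kinematic sketch shows why headway in seconds is the natural yardstick. Assume both cars travel at the same speed $v$ with an initial gap $d$, the lead car brakes hard, both brake with the same deceleration $a$, and the following driver starts braking only after a reaction time $t_r$. This is a textbook simplification for illustration, not the model behind our data. The stopping distances $x$ and the final gap are:

$$
\begin{aligned}
x_{\text{lead}} &= \frac{v^2}{2a}, \qquad x_{\text{follow}} = v\,t_r + \frac{v^2}{2a} \\
d_{\text{final}} &= d - \left(x_{\text{follow}} - x_{\text{lead}}\right) = d - v\,t_r \\
d_{\text{final}} < 0 &\iff \tau = \frac{d}{v} < t_r
\end{aligned}
$$

Because the follower is never slower than the lead car until it stops, the gap only shrinks, so its final value is its minimum. Under these assumptions, a collision happens exactly when the time headway $\tau$ is shorter than the reaction time. For example, at 30 mph (about 13.4 m/s) with a 1.5 s reaction time, the follower covers about 20 m before it even starts braking.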

So, What Does All This Mean?

Lev and I had a fun afternoon diving into all this data and virtually driving all over New York. We could see the power these datasets and insights hold for making city traffic smarter and safer, informing auto insurance actuaries, and, most importantly, helping save lives on the road. Coming back to the vision I opened with, it IS possible to reduce car crash casualties by 50% in 5 years. That is, provided two conditions are met: complete indexing of all driving and an Advanced Driver Assistance System (ADAS) installed in every car.