NEXET — The Largest and Most Diverse Road Dataset in the World

Nexar recently released the largest and most diverse automotive road dataset for researchers in the world. We’d like to provide some context on the evolution of autonomous driving perception and why we’re giving everyone access to our data.

For 30 years, neural networks have been the black sheep of computer vision, an interesting concept without any realistic implementations. In the past 5 years, however, we’ve seen a tremendous leap forward in the effectiveness of deep networks. As increasingly advanced algorithms are created, more and more resources are allocated to advanced neural networks research.

What triggered the rise of deep learning? One could attribute it to the availability of processing power (e.g., GPUs) or to theoretical improvements (e.g., ReLU, Dropout, data augmentation), but a more fundamental change was the release of a large-scale labeled dataset, ImageNet. We believe this contributed significantly to the deep learning boom the world is experiencing today.

As neural networks grew and deepened, the need for proper benchmarking also arose. Researchers required methods that could compare deep network results in a reproducible manner. Today, the computer vision field relies on these benchmarks for research — each paper must show results on one or more benchmarks to be considered for publication.

Good benchmarks helped researchers and developers solve specific problems, but at the same time they biased research effort toward the directions dictated by the data.

Undoubtedly, one of the industries that received the biggest push from the revival of deep learning was the autonomous vehicle industry. What was barely feasible 10 years ago now seems within reach. The automotive industry is no different in its need for data to train deep learning networks. Several public datasets have been released for this purpose: the Caltech pedestrian detection benchmark (2009), TME motorway (2011), the KITTI vision benchmark suite (2012), TuSimple (2017), and the Mapillary Vistas dataset (2017) are good examples.

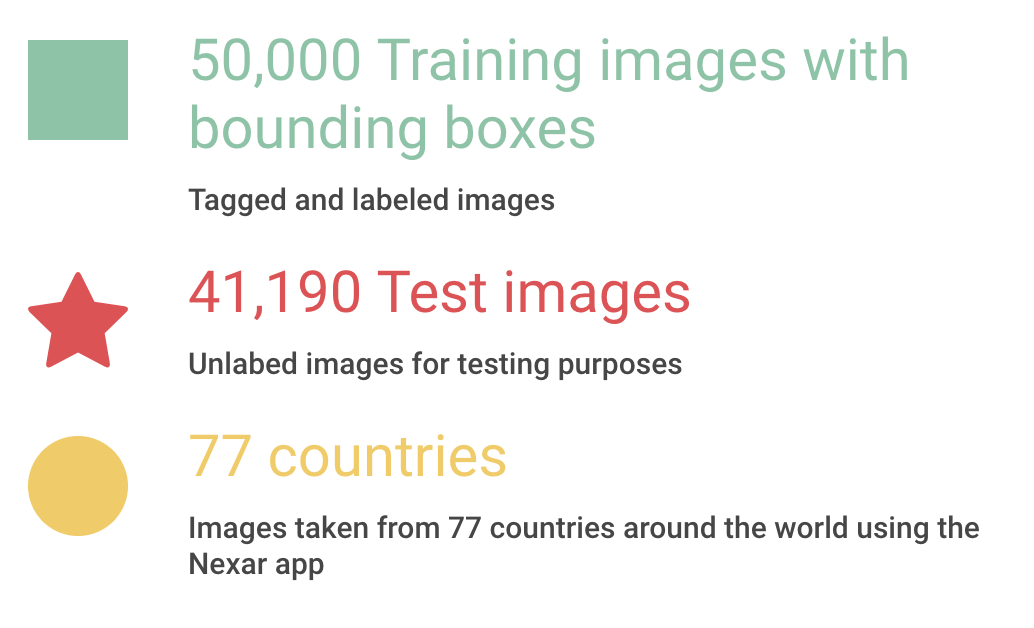

The NEXET Dataset

At Nexar, we’ve always prioritized collecting as much road data and imagery as possible. We understand a deep learning system is only as good as the data you feed it. Over time, we’ve seen an enormous variety of roads, cars, incidents, and accidents. We’ve seen roads in deep Scandinavian snow as well as under the scorching Middle Eastern sun. We’ve seen luxury cars and horse-powered wagons. We’ve sadly seen car crashes, motorcycle crashes, bicycle crashes, and pedestrians getting hit. We can now supply our deep networks with mountains of that data to detect dangerous situations on the road and provide drivers with real-time, accurate warnings.

But we don’t want to just keep all this data for ourselves...

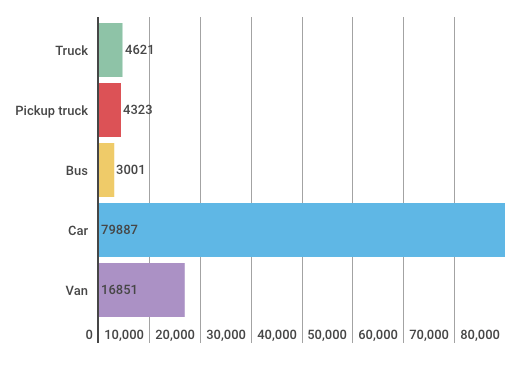

Our mission here at Nexar is to save lives by helping to reduce the number of car collisions. To this end, we released a dashcam application for smartphones (both iOS and Android) which records traffic in front of drivers and uses AI to warn them of imminent collisions. This latter feature is called the Forward Collision Detector. This detector uses a pipeline of algorithms beginning with accurate detection of the rear of relevant cars in front of a driver. The purpose of making the NEXET dataset available is to accelerate the development of deep learning networks that accurately detect the rear of cars up ahead.

Diversity is Key

At Nexar, we collected a diverse dataset to support the construction of robust detectors and accurate labeling of the images. We’ve aggregated images and videos taken in different lighting conditions, weather conditions, and geographical locations. We label only the rear part of nearby cars which could become involved in a crash. The NEXET dataset was carefully collected by sampling our enormous road database and curated to address as many situations as possible.

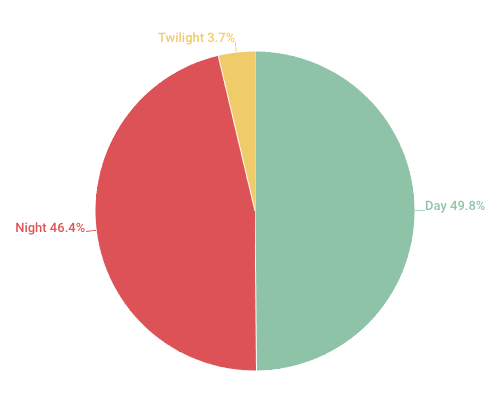

A good deep network is dependent on the data you feed it. There is no point in developing a detector that performs marvelously on day images but fails at night, so a diverse dataset is crucial for achieving robustness. NEXET was built with diversity in mind.
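One way to make a day/night gap like this visible is to break a detector's score down by lighting condition instead of reporting a single number. The sketch below is only an illustration under stated assumptions: the `(condition, ground_truth_boxes, predicted_boxes)` sample layout, the `recall_by_condition` helper, and the toy boxes are all hypothetical and are not the NEXET annotation format or Nexar's evaluation method. It uses a simple greedy IoU match at a 0.5 threshold.

```python
from collections import defaultdict


def iou(a, b):
    """Intersection over union of two boxes given as (x0, y0, x1, y1)."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def recall_by_condition(samples, iou_threshold=0.5):
    """Fraction of ground-truth boxes matched by a prediction, per condition.

    samples: iterable of (condition, ground_truth_boxes, predicted_boxes).
    This layout is an assumption made for the example.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for condition, truths, preds in samples:
        unused = list(preds)  # each prediction may match at most one box
        for gt in truths:
            totals[condition] += 1
            best = max(unused, key=lambda p: iou(gt, p), default=None)
            if best is not None and iou(gt, best) >= iou_threshold:
                hits[condition] += 1
                unused.remove(best)
    return {c: hits[c] / totals[c] for c in totals}


# Toy, made-up data: one daytime image and two nighttime images.
samples = [
    ("day", [(0, 0, 10, 10)], [(0, 0, 10, 10)]),
    ("night", [(0, 0, 10, 10)], [(20, 20, 30, 30)]),  # missed car
    ("night", [(0, 0, 10, 10)], [(1, 0, 10, 10)]),    # close match
]
print(recall_by_condition(samples))  # {'day': 1.0, 'night': 0.5}
```

A detector that looks fine on the pooled score can still show a much lower value in the night bucket, which is the failure mode a diverse dataset is meant to expose.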

We expect this dataset to be useful for developers and to provide deep learning systems with the amount and variety of images they need in order to produce high-quality, accurate results in object detection. In particular, NEXET can be used for research in domain adaptation, for example between day and night images or across different geographical regions. Below is a bit more information about the dataset and what it provides the industry.

Lighting Conditions

Distribution of Automotive Classes

Geographic Distribution

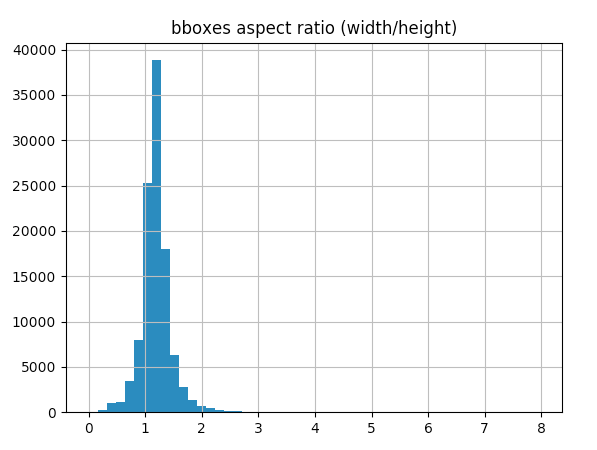

Distribution of Aspect Ratio

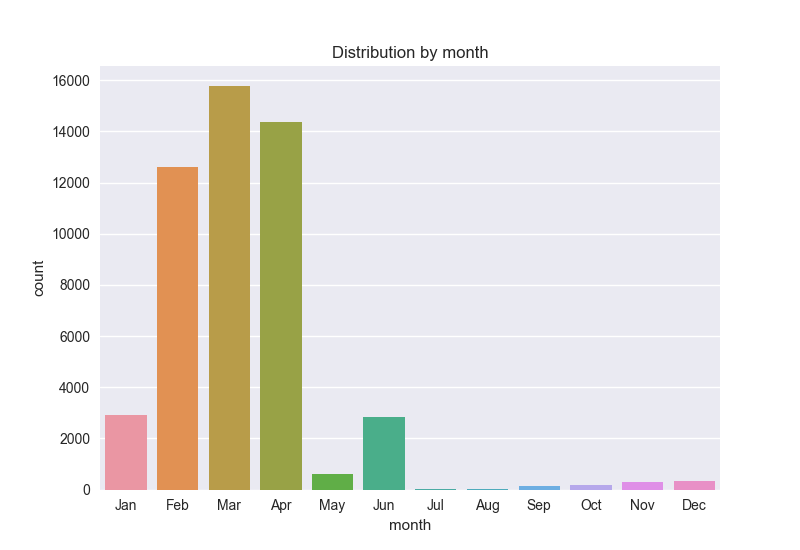

Distribution Over Time

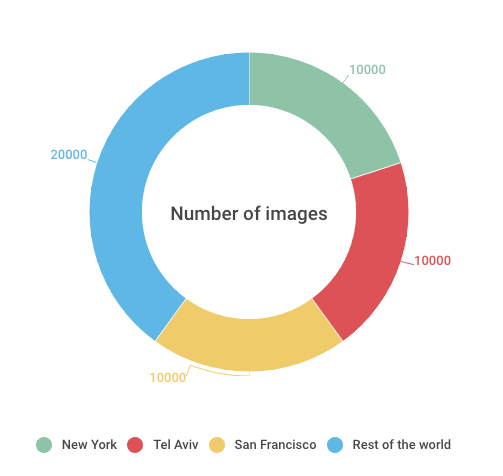

Distribution Across Cities

Two Examples of Daylight Conditions

Two Examples of Nighttime Conditions

Two Examples of Dusk, Dawn or Twilight Conditions

Nexar’s Latest Challenge to Developers

Along with the NEXET dataset, we released a challenge inviting developers to use this dataset for object detection. We want to collaborate with the best in the industry to develop driving perception that works in all-weather, all-road, all-terrain, all-geography and all-camera environments. The industry needs to grow beyond its current functionality and perceived road or driver conditions to sustain level 4 and 5 autonomy.

Whether you are a developer or a company working in the autonomous driving space, we encourage you to freely download the dataset at nxr.cm/challenge2.